The big, knotty question that everyone in education seems to be grappling with is whether the advancement and adoption of generative AI is going to be a net benefit for learning. Proponents claim that it will provide more opportunities for personalization via teachers’ use to differentiate instruction or through students interacting with tutoring applications. Detractors claim that students will simply use these tools as a crutch or a shortcut to bypass the process of learning. I think both of these will be true, which requires those of us in education to take a very nuanced approach and identify when and how the use of AI technology can be implemented appropriately to benefit learning. One potentially helpful framing for developing such an approach is to distinguish the distinct concepts of learning and performance.

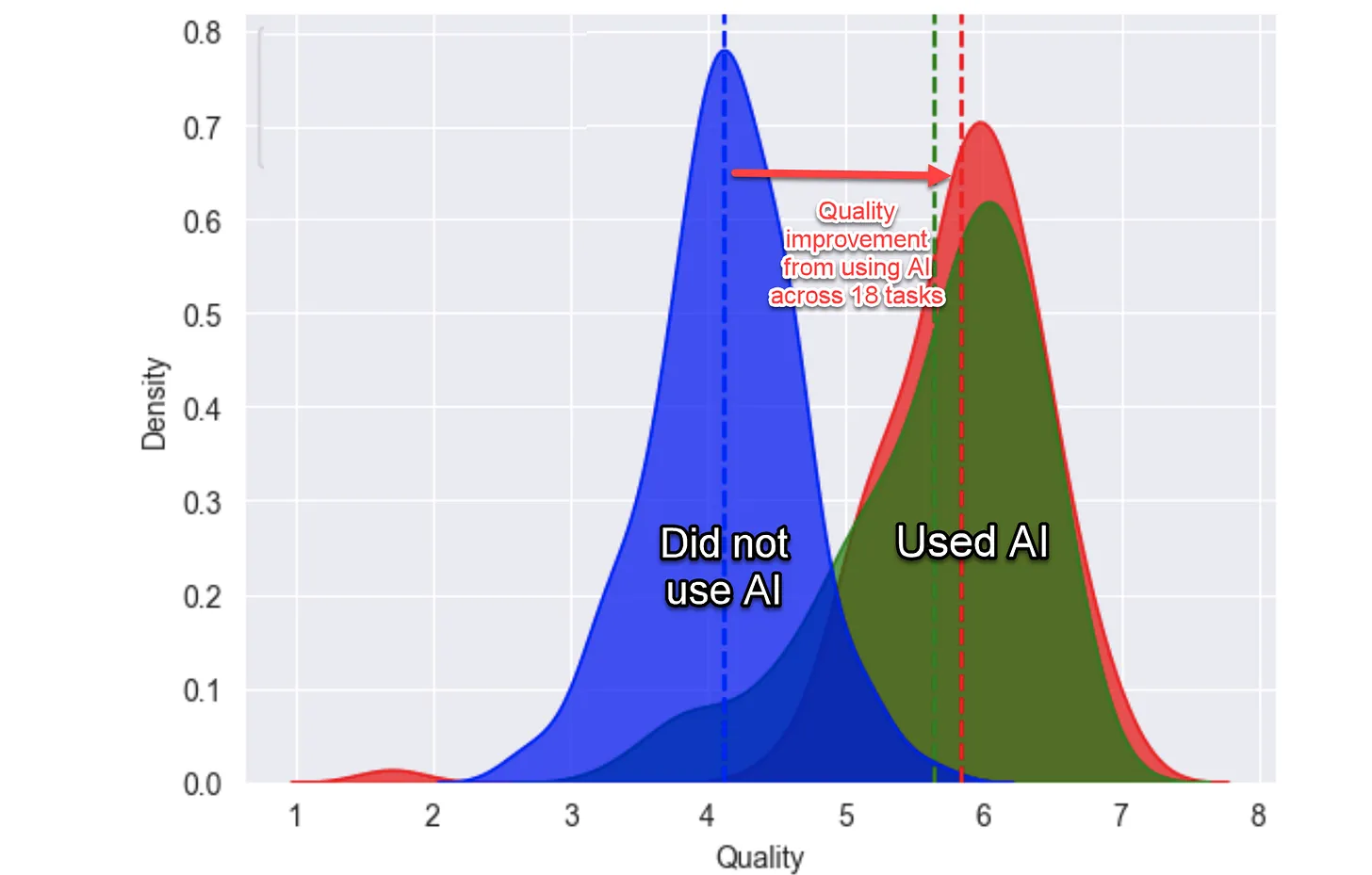

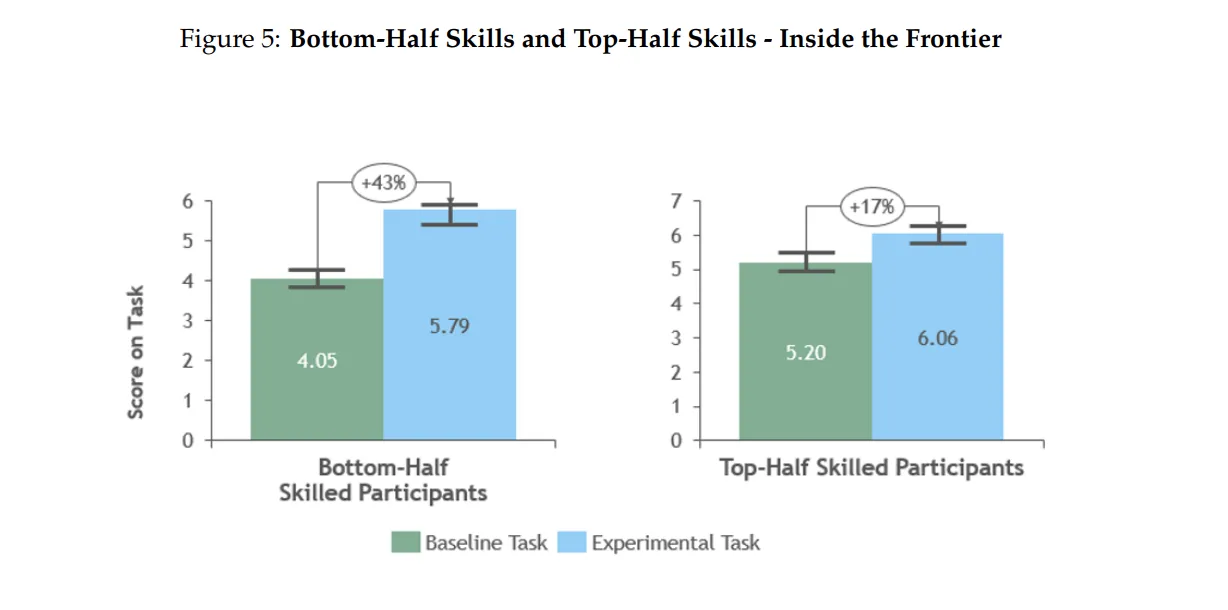

At a conference where I was recently presenting, I showed some findings from one of the few early studies on how the use of AI tools is having an impact in a controlled setting. The study, authored by a team from Harvard, MIT, the University of Pennsylvania, and the Boston Consulting Group, found that professional consultants who used AI tools completed more tasks, and at a higher level of quality, across every measure during the study’s timeframe.

Additionally, those consultants who were considered to be in the bottom half of performers at the time of the experiment saw a significantly larger jump in performance with the use of AI tools than those in the top half.

Taken together, these findings suggest that the use of AI tools might be both a productivity enhancer and a skill leveler.

“Is that good for learning?”

When I explained these findings, an attendee at the conference asked what seems like a very simple question: “Is that good?” At first glance, the answer seems obvious; employees benefited, the firm benefited, and those employees who may have previously struggled with certain tasks found themselves more easily able to keep pace with top performers. But I think the larger question, and the one the session participant was really asking, is, “Is that good for learning?”

Cognitive psychologist Robert Bjork has attempted to untangle the two concepts of learning and performance, defining learning as “relatively permanent changes in behavior or knowledge that support long-term retention and transfer,” while performance “refers to the temporary fluctuations in behavior or knowledge that can be observed and measured during or immediately after the acquisition process” (Soderstrom & Bjork, 2015). In school settings, designing and implementing assessments that measure both learning and performance is important but complex and difficult to achieve. Extrapolating from the study of consultants’ work, it seems intuitive that giving students access to AI tools will help them complete more tasks at a higher quality, but the question remains of whether it will facilitate true learning. If AI tools are more suited for enhancing performance by design, it’s possible that standardized tests and other forms of high-stakes assessments, where students are restricted from using AI technology, will become even more important in the process of measuring learning.

While we don’t yet have a body of research to lean on when it comes to AI use for learning, there are a few strategies that educators can employ to increase the probability of deeper learning rather than simply increased performance:

- Intentionally design assessments for learning.

Focus on retention and transfer over longer stretches of time, dive deeper into understanding and metacognition, emphasize the application of knowledge and skills in new or broader contexts, and use assessments to measure growth and development over time.

- Provide guidance on what AI tools to use.

Where possible, employ AI tools that are specifically designed to facilitate the process of learning rather than the execution of tasks. We are still in the early stages of having access to these tools, and the jury is still out on their impact, but some that have been getting attention include Khanmigo, Flint, and Bloom. ChatGPT’s ability to create custom GPTs also holds some promise in this area, and Common Sense Media is in the early stages of reviewing tools for educational use as well.

- Have students critically analyze AI output.

When students use AI tools for certain portions of their assignments, have them engage critically with the output of the AI, without AI’s assistance, to promote more authentic understanding of concepts and knowledge.

Ultimately, the entire education community will need to test and experiment with AI tools to gain a better understanding of their impact on learning rather than just performance. We will need to take an iterative, collaborative, and intentional approach to identify what is working, and what isn’t, and communicate our findings to move forward together as a field.